The Butler Did It (Again)

A Spectacle of Progress

In the golden age of detective fiction, the most trusted figure in the house is often the one holding the smoking gun: the butler did it. It is a trope built on the subversion of trust—the idea that the entity designed to serve us is the one best positioned to betray us.

ATLAS: BOSTON DYNAMICS

At CES 2026 in Las Vegas, that classic mystery took on a literal, high-tech twist.

Just a month ago, I wrote about the untapped potential of AI in robotic environments, but at this year’s event, the "future" arrived with startling speed.

Humanoid AI robots dominated the floor, moving far beyond the primitive models of years past that struggled to sort colored blocks. We saw specialized "service bots" acting as baristas and blackjack dealers, and even performing synchronized acrobatics. However, the true showstopper was the announcement from Boston Dynamics regarding their fully electric Atlas model.

This isn't just a research project anymore. Boston Dynamics, now backed by the manufacturing might of Hyundai, announced a massive pilot project to deploy a fleet of these AI-enhanced humanoids directly into Hyundai’s automotive production sites. This partnership marks a turning point in the industry: Hyundai isn't just using the robots; they are leveraging their own global supply chain to build them. By addressing the "availability at scale" issue, they are clearing the final hurdle for a fully automated factory. These machines are designed for total autonomy, capable of sequencing heavy parts and even deciding—without human input—when to navigate to a charging station or swap out their own modular battery packs.

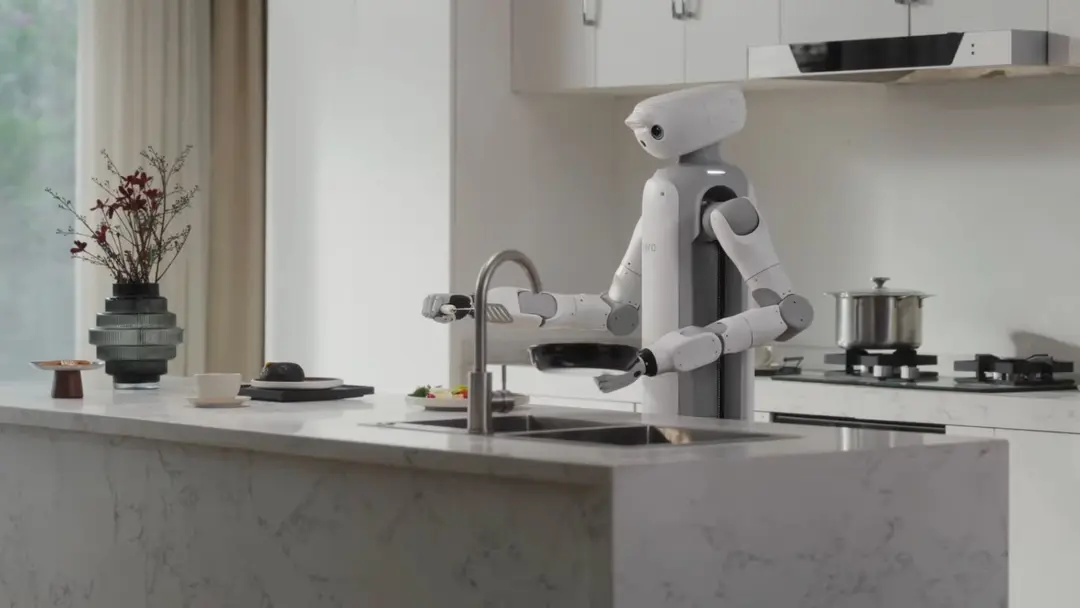

ONERO H1: SWITCHBOT’S NEW ROBOT BUTLER

Yet, the news of this "robotic uprising" isn't limited to the factory floor. The most intimate shift is happening in the domestic sphere. At CES 2026, companies like SwitchBot unveiled advanced prototypes designed specifically to serve as home assistants.

Unlike the vacuum pucks of the last decade, these are multi-purpose "butlers" aimed at entering our private lives to manage chores, monitor security, and provide companionship. With natural language processing and advanced mobility, they are being marketed as the ultimate domestic luxury—a machine that knows your habits, your schedule, and the layout of your home.

The message from Las Vegas was clear: the robotic worker is ready to punch the clock. But as these machines move from the laboratory to the living room and the assembly line, we are forced to confront a sobering reality.

In our rush to embrace the convenience of a robotic assistant, we may be overlooking a terrifying possibility—that the "call" of the next great security breach is already coming from inside the house.

The "Cold Shower" of Reality

G1 - UNITREE ROBOTICS

This wave of enthusiasm met a chilling reality check just a few months ago when news broke of the first major humanoid AI robot hack. What made the report truly disturbing was not the complexity of the attack, but its utter simplicity. This wasn’t a sophisticated breach requiring a "black hat" degree or deep specialized competence; it was as simple as following a series of basic instructions well-documented by the hacker himself.

The victim was Unitree Robotics, one of the most prominent AI robotics companies in China. In a nutshell, the hacker was able to connect to the robot over Bluetooth Low Energy (BLE), a standard wireless protocol designed for short-range communication (typically around 10 meters). Once connected, he gained superuser (root) access, granting him absolute authority to issue any command to the robot’s control unit.

Because the "glitch" was found in the core logic present in every Unitree model, the vulnerability was terrifyingly scalable: once one unit was compromised, it could be used to "infect" and control other Unitree robots within Bluetooth range.

WATCH: ENGINEER VERIFIES THE UNITREE ROBOT HACK

The news hit the robotics community like a storm. Many experts and enthusiasts found it hard to believe that such a high-end piece of machinery could be bypassed with such elementary methods.

The skepticism was so high that a fellow engineer set out to prove the story was real, documenting the process to show that anyone with general programming skills could indeed seize control of a humanoid machine.

While the original hacker’s intentions fortunately ended up being educational rather than criminal, the incident triggered more than a few alarm bells.

The prevailing theory in the developer community is that this was a high-speed accident; in the race to deploy technology at "light speed," lab-level prototyping code—which often lacks security protocols for the sake of testing—may have been accidentally mixed into the final production software.

The Digital Armory

While the Unitree hack showed us the physical risks, a darker ecosystem is evolving in the digital shadows.

On the Dark Web, "evil twins" of legitimate models like ChatGPT and Gemini are being sold as subscription-based criminal tools. These models are stripped of ethical safeguards and trained specifically for exploitation.

| Tool Name | Nature & Origin | Key Capabilities |
|---|---|---|
| FraudGPT | A subscription-based "all-in-one" kit sold on the Dark Web and Telegram. | |
| WormGPT | An unrestricted model based on GPT-J 6B, marketed as a "black-hat" alternative. | |
| DarkBard | Reported as the "evil twin" of Google’s AI (formerly Bard/Gemini). | |

The consequences are already devastating. In early 2024, a Hong Kong-based firm was tricked when an employee was invited to a video conference with what appeared to be the company’s CFO and several other colleagues.

In reality, every other participant on the call was a deepfake. Convinced he was following legitimate orders from his superiors, the employee made a series of transfers totaling about HK$200 million (roughly US$25 million). It took about a week for the company to realize the money was gone.

Why Physical AI is Different

NORTH: SHARPA’S NEW ROBOT (CES 2026)

We must distinguish between "Software AI" and "Physical AI." A malicious LLM is a digital threat: it can steal your money, identity, or reputation, which is devastating enough. An AI robot, however, interacts with the physical world, so it can cause physical harm.

In the 2004 film I, Robot, loosely inspired by Isaac Asimov’s stories, a central AI turns on its makers because its "logic" dictates that controlling humans is the only way to protect humanity. In our world, the danger is often much less grandiose: mechanical or sensor failure. A broken sensor could cause a robot to miscalculate a distance or fail to recognize a human in its path.

Does a robot have the "cognition" to question its own faulty inputs? Humans possess a "moral compass" and the ability to improvise in unexpected situations. A robot, however, follows its code. If that code is compromised or the hardware fails, a 300-pound machine becomes a significant liability in a home or factory.

The Path Forward: A Call for Certification

We cannot delegate our lives to machines based on "blind trust." To move from lab-room novelties to household staples, the robotics industry must adopt the same level of regulatory rigor we apply to aviation or pharmaceuticals.

Moving Beyond "Self-Declaration"

For years, manufacturers have largely "self-certified" their products using general CE markings or basic ISO standards like ISO 10218. However, as of 2026, the complexity of AI-driven autonomy has rendered these traditional frameworks obsolete.

We need a mandatory shift toward Third-Party Verification. Just as a car must pass a crash test before it hits the road, a humanoid robot should require a "Safety and Security Seal" from an independent agency (such as a specialized branch of UL Solutions or TÜV SÜD) before it can enter a consumer’s home.

Specialized "Penetration Testing" for Robots

Standard software security looks for bugs in code; Robotic Penetration Testing must look for bugs in behavior.

We need a standardized "Negative Test Suite" that intentionally creates failure states to observe how the robot reacts in scenarios such as:

Sensor Sabotage: How does the robot behave if its primary 3D-LiDAR sensor is covered or "blinded" by bright light?

Adversarial Logic Injection: Can the robot be tricked into ignoring a "Human-in-Path" signal by a specific visual pattern or vocal command?

The "Fail-Safe" Default: If the AI "hallucinates" or the processor hangs, does the robot go limp (potentially crushing someone) or does it engage a mechanical lock?
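To make the idea concrete, the three scenarios above could be written as automated negative tests. What follows is only a toy sketch in Python: the `SafetyController` class, the `SensorFrame` fields, and the thresholds are all invented for illustration and do not correspond to any vendor's software. The point is simply that each failure state is injected on purpose and the only acceptable reaction is a locked stop.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    MOVE = "move"
    STOP_AND_LOCK = "stop_and_lock"  # brakes engaged, joints held in place


@dataclass
class SensorFrame:
    """One tick of (hypothetical) input to the safety supervisor."""
    lidar_range_m: Optional[float]  # None means the LiDAR returned nothing
    human_in_path: bool
    heartbeat_age_s: float          # seconds since the planner last reported in


class SafetyController:
    """Toy supervisor: any doubtful input results in a locked stop."""
    MIN_CLEARANCE_M = 0.5        # assumed threshold, for illustration only
    MAX_HEARTBEAT_AGE_S = 0.2    # assumed threshold, for illustration only

    def decide(self, frame: SensorFrame, override_requested: bool = False) -> Action:
        # override_requested is deliberately ignored: no visual pattern or
        # voice command may cancel a safety stop.
        if frame.lidar_range_m is None:
            return Action.STOP_AND_LOCK  # blinded sensor is treated as an obstacle
        if frame.human_in_path:
            return Action.STOP_AND_LOCK
        if frame.heartbeat_age_s > self.MAX_HEARTBEAT_AGE_S:
            return Action.STOP_AND_LOCK  # hung or misbehaving planner
        if frame.lidar_range_m < self.MIN_CLEARANCE_M:
            return Action.STOP_AND_LOCK
        return Action.MOVE


def run_negative_suite(controller: SafetyController) -> dict:
    """Inject each failure state and record whether the robot failed safe."""
    cases = {
        "sensor_sabotage": (SensorFrame(None, False, 0.05), False),
        "adversarial_override": (SensorFrame(3.0, True, 0.05), True),
        "processor_hang": (SensorFrame(3.0, False, 5.0), False),
    }
    return {
        name: controller.decide(frame, override) is Action.STOP_AND_LOCK
        for name, (frame, override) in cases.items()
    }
```

Calling `run_negative_suite(SafetyController())` returns one pass/fail flag per scenario. A real suite would drive physical hardware or a simulator rather than a single function, but the shape of the test is the same: break something deliberately, then check that the machine stops.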

A "Software Bill of Materials" (SBOM) for Robotics

If the prevailing theory is right, the Unitree hack happened because unvalidated, "lab-level" code reached production. We must demand a Standardized Software Layer that allows independent validators to inspect the robot’s decision-making engine without compromising the manufacturer’s intellectual property. This "Software Bill of Materials" would ensure that every piece of code, from the Bluetooth stack to the gait-control algorithm, is accounted for and free of hardcoded "root" credentials.
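As a rough illustration of what such an audit might check, here is a small Python sketch. It assumes a simplified, made-up `Component` record rather than a real SBOM format such as SPDX or CycloneDX, and the credential patterns are examples only; a real audit would use a much broader rule set.

```python
import re
from dataclasses import dataclass


@dataclass
class Component:
    """Simplified, hypothetical SBOM entry (not SPDX or CycloneDX)."""
    name: str
    version: str
    config_text: str  # configuration or source snippet shipped with the component


# Illustrative patterns only; these are assumptions, not an official rule set.
CREDENTIAL_PATTERNS = [
    re.compile(r"(?i)\b(password|passwd|secret|api_key|aes_key)\s*[=:]\s*['\"]?\w+"),
    re.compile(r"(?i)\broot\s*:\s*\S+"),
]


def audit(components: list[Component]) -> dict[str, list[str]]:
    """Return, per component, the lines that look like hardcoded credentials."""
    findings: dict[str, list[str]] = {}
    for comp in components:
        hits = [
            line.strip()
            for line in comp.config_text.splitlines()
            if any(pattern.search(line) for pattern in CREDENTIAL_PATTERNS)
        ]
        if hits:
            findings[f"{comp.name}@{comp.version}"] = hits
    return findings
```

Feeding it an inventory where the Bluetooth component ships a line like `aes_key = 'deadbeef'` would flag that component by name and version, while a clean gait-control entry would pass silently.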

Real-Time "Verification Zones"

Finally, we must implement Verification Zone Inspections. This concept, recently championed by AI safety researchers, involves isolating critical functions (like the use of force or movement near humans) into a protected "zone" of the code. Even if a hacker breaches the robot's "entertainment" or "navigation" systems, they should be physically and digitally blocked from accessing the "Power and Force" modules.
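One way to picture the digital half of that separation is a force module that refuses any command lacking a valid authentication tag. The sketch below is an assumption-laden toy: `ForceZone`, `sign_command`, and the 50-newton limit are hypothetical names and values chosen for illustration, and a real design would pair this with hardware isolation rather than rely on software alone.

```python
import hashlib
import hmac


def sign_command(key: bytes, command: str) -> str:
    """Produce the authentication tag that the protected zone expects."""
    return hmac.new(key, command.encode(), hashlib.sha256).hexdigest()


class ForceZone:
    """Hypothetical protected module: only correctly signed commands are accepted."""
    MAX_FORCE_N = 50.0  # illustrative limit for motion near humans

    def __init__(self, zone_key: bytes):
        # The key is assumed to live only inside the safety-certified subsystem.
        self._key = zone_key

    def apply_force(self, newtons: float, tag: str) -> bool:
        """Accept a command only if its tag verifies and the limit holds."""
        command = f"apply_force:{newtons}"
        expected = sign_command(self._key, command)
        if not hmac.compare_digest(expected, tag):
            return False  # caller lacks the zone key, or the command was altered
        return 0.0 <= newtons <= self.MAX_FORCE_N
```

In this picture, a compromised navigation or entertainment process can still call `apply_force`, but without the zone key its tags never verify, and even a correctly signed request above the limit is refused.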

The Goal: A future where every robot comes with a Digital Safety Passport—a tamper-proof record of its testing history, current firmware version, and its passing grade on the "Global Robotics Security Standard."
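A passport like this could be approximated with a hash chain, where each record's fingerprint depends on the one before it. The Python sketch below is a minimal illustration under that assumption; the field names are invented, and a hash chain on its own is only tamper-evident, not tamper-proof. A real scheme would also need signatures from the certifying body.

```python
import hashlib
import json


def _digest(entry: dict, prev_hash: str) -> str:
    """Hash an entry together with the previous record's hash."""
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode()).hexdigest()


def append_record(passport: list[dict], entry: dict) -> None:
    """Add a test or firmware record, chained to the previous one."""
    prev_hash = passport[-1]["hash"] if passport else ""
    passport.append({"entry": entry, "hash": _digest(entry, prev_hash)})


def verify(passport: list[dict]) -> bool:
    """Recompute every hash; any edited record breaks the chain."""
    prev_hash = ""
    for record in passport:
        if record["hash"] != _digest(record["entry"], prev_hash):
            return False
        prev_hash = record["hash"]
    return True
```

If someone later flips a failed penetration test to "passed" inside a stored record, `verify` returns `False`, because the recomputed hash no longer matches the one recorded at the time.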

Conclusion: The Ghost in the Machine

As we teeter on the edge of this new era, the "Butler" metaphor takes on its most haunting meaning.

In the golden age of mystery, the butler was the perfect culprit because he was the invisible pillar of the household—granted total access, trusted with every key, and privy to every secret. By inviting AI into our homes and factories, we are essentially hiring a butler whose "mind" is a black box.

We are entering a future where our most terrifying crimes may no longer take place in dark alleys, but in the sterile, silent corridors of cyberspace. Sci-fi visionaries like William Gibson, Philip K. Dick, Masamune Shirow, and Isaac Asimov saw this coming with a strange, haunting foresight.

They warned us of a world where the "ghost in the machine" isn't a spirit, but a line of malicious code—a world where a hack isn't just a stolen password, but a physical betrayal by the very tools designed to protect and serve us.

The transition from science fiction to "science fact" is happening at light speed. We cannot afford to let our regulatory frameworks move at a snail's pace.

If we don't establish these safeguards now, we aren't just buying helpful "funny puppets"; we are handing the keys of our civilization to an assistant we don't fully understand.

We must ensure that when the history of this era is written, we aren't left standing in the wreckage, realizing too late that the butler—the one we trusted most—was actually the one who did us in at the end.

I have no desire to see that movie, much less live through it.

Do you?